Stanford CS336: Language Modeling from Scratch

Stanford Online | 17 lectures | Advanced

Language models are the cornerstone of modern natural language processing (NLP) applications and open up a new paradigm in which a single general-purpose system addresses a range of downstream tasks. As the fields of artificial intelligence (AI), machine learning (ML), and NLP continue to grow, a deep understanding of language models becomes essential for scientists and engineers alike. This course gives students a comprehensive understanding of language models by walking them through the entire process of developing their own. Drawing inspiration from operating systems courses that create an entire operating system from scratch, we will lead students through every aspect of language model creation, including data collection and cleaning for pre-training, transformer model construction, model training, and evaluation before deployment.

Course Website: https://stanford-cs336.github.io/

Course Content

1

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 1: Overview and Tokenization

This session introduces a brand-new course on building language models from scratch. You learn what language modeling is, where it’s used (speech recognition, translation, text generation, classification), and how different modeling families work. The class emphasizes implementing models yourself in Python and PyTorch, plus how to train and evaluate them.

Stanford Online | beginner

2

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 2: PyTorch, Resource Accounting

This session teaches two essentials for building language models: PyTorch basics and resource accounting. PyTorch is a library for working with tensors (multi‑dimensional arrays) and can run on CPU or GPU. You learn how to create tensors, perform math (including matrix multiplies), reshape, index/slice, and use automatic differentiation to compute gradients for training.

Stanford Online | beginner

3

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 3: Architectures, Hyperparameters

Language modeling means predicting the next token (a token is a small piece of text like a word or subword) given all tokens before it. If you can estimate this next-token probability well, you can generate text by sampling one token at a time and appending it to the history. This step-by-step sampling turns probabilities into full sentences or paragraphs. Good models make these probabilities sharp for likely words and low for unlikely ones.
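The sampling loop described above can be sketched in a few lines of plain Python. This is only an illustration under simplifying assumptions: the probability table `NEXT_TOKEN_PROBS` is made up, and it conditions on the previous token alone, whereas a real language model conditions on the whole history.

```python
import random

# Hypothetical toy "model": next-token probabilities given only the previous
# token. A real language model would condition on the full history.
NEXT_TOKEN_PROBS = {
    "<s>": {"the": 0.7, "a": 0.3},
    "the": {"cat": 0.6, "dog": 0.4},
    "a": {"cat": 0.5, "dog": 0.5},
    "cat": {"sleeps": 0.8, "</s>": 0.2},
    "dog": {"sleeps": 0.7, "</s>": 0.3},
    "sleeps": {"</s>": 1.0},
}


def sample_sequence(rng, max_tokens=10):
    """Generate text by sampling one token at a time and appending it."""
    history = ["<s>"]
    for _ in range(max_tokens):
        probs = NEXT_TOKEN_PROBS[history[-1]]
        tokens = list(probs)
        weights = [probs[t] for t in tokens]
        next_token = rng.choices(tokens, weights=weights, k=1)[0]
        if next_token == "</s>":  # end-of-sequence marker stops generation
            break
        history.append(next_token)
    return history[1:]  # drop the start marker


rng = random.Random(0)
sample = sample_sequence(rng)
print(" ".join(sample))
```

Each call draws a fresh sequence; seeding `random.Random` only makes a run repeatable.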

Stanford Online | beginner

4

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 4: Mixture of Experts

The lecture explains why simply making language models bigger (more parameters) helped for years, but also why data size and training time matter just as much. From BERT in 2018 to GPT‑2, GPT‑3, PaLM, Chinchilla, and Llama 2, the trend shows performance rises when models are scaled correctly with enough data and compute.

Stanford Online | intermediate

5

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 5: GPUs

GPUs (Graphics Processing Units) are critical for deep learning because they run thousands of simple math operations at the same time. Language models like Transformers rely on huge numbers of matrix multiplications, which are perfect for parallel processing. CPUs have a few strong cores for complex, step-by-step tasks, while GPUs have many simpler cores for doing lots of math in parallel. Using GPUs correctly can make training and inference dramatically faster.

Stanford Online | beginner

6

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 6: Kernels, Triton

Modern language models are expensive to run because they perform many matrix multiplications. The main cost comes from both compute and moving data in and out of GPU memory. Optimizing the low-level code that runs these operations can make inference and training much faster and cheaper.

Stanford Online | intermediate

7

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 7: Parallelism 1

This lesson teaches two big ways to train neural networks on many GPUs: data parallelism and model parallelism. Data parallelism copies the whole model to every GPU and splits the dataset into equal shards, then averages gradients to take one update step. Model parallelism splits the model itself across GPUs and passes activations forward and gradients backward between them.
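As a rough sketch of the data-parallel idea, the snippet below simulates several workers in a single process. The one-parameter model `y_hat = w * x`, the helper names, and the data are all hypothetical; the plain average stands in for the all-reduce a real multi-GPU setup would perform.

```python
def grad_mse(w, xs, ys):
    """Gradient of mean squared error for the 1-D model y_hat = w * x."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def data_parallel_step(w, xs, ys, num_workers, lr):
    """One update step: every worker holds the same w and an equal data shard."""
    shard = len(xs) // num_workers  # assumes the data divides evenly
    local_grads = [
        grad_mse(w, xs[i * shard:(i + 1) * shard], ys[i * shard:(i + 1) * shard])
        for i in range(num_workers)
    ]
    avg_grad = sum(local_grads) / num_workers  # stands in for an all-reduce
    return w - lr * avg_grad, avg_grad


xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [2.0 * x for x in xs]  # data generated with a true weight of 2.0
w0 = 0.5

new_w, avg_grad = data_parallel_step(w0, xs, ys, num_workers=4, lr=0.01)
full_grad = grad_mse(w0, xs, ys)
print(avg_grad, full_grad, new_w)
```

With equal-sized shards, the average of the per-worker gradients matches the gradient computed on the full batch, which is why every replica can apply the same update.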

Stanford Online | intermediate

8

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 8: Parallelism 2

This session explains how to speed up and scale training when one GPU or a simple setup is not enough. It reviews data parallelism (split data across devices) and pipeline parallelism (split model across devices), then dives into practical fixes for their main bottlenecks. The key tools are gradient accumulation, virtual batch size, and interleaved pipeline stages. You’ll learn the trade‑offs between memory use, communication overhead, and idle time.
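Gradient accumulation can be illustrated with a small, self-contained sketch. The one-parameter model and the function names are assumptions made for illustration; the point is only that summing scaled micro-batch gradients reproduces the gradient of one large "virtual" batch while processing a few examples at a time.

```python
def grad_mse(w, xs, ys):
    """Gradient of mean squared error for the 1-D model y_hat = w * x."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def accumulated_grad(w, xs, ys, micro_batch_size):
    """Accumulate gradients over micro-batches before taking a single step."""
    num_micro = len(xs) // micro_batch_size  # assumes an even split
    total = 0.0
    for i in range(num_micro):
        lo, hi = i * micro_batch_size, (i + 1) * micro_batch_size
        # Scale each micro-batch gradient so the sum is a mean over the
        # whole virtual batch.
        total += grad_mse(w, xs[lo:hi], ys[lo:hi]) / num_micro
    return total


xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [3.0 * x + 1.0 for x in xs]
w = 0.0

acc = accumulated_grad(w, xs, ys, micro_batch_size=2)
full = grad_mse(w, xs, ys)
print(acc, full)
```

The trade-off is time for memory: only one micro-batch is processed at a time, at the cost of more sequential forward and backward passes per update.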

Stanford Online | intermediate

9

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 9: Scaling Laws 1

Scaling laws are empirical rules that show how a model’s loss (error) drops as you grow model size, data, or compute. They take a power-law form: Loss = A × N^(-α), where N can be parameters, data tokens, or compute, and α is the scaling exponent. This lets us predict how bigger models might perform without training them.
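To make the power-law form concrete, here is a minimal sketch that fits A and α by least squares in log-log space and then extrapolates to a larger N. The constants and the "measurements" are synthetic and chosen purely for illustration; they are not results from the lecture.

```python
import math


def fit_power_law(ns, losses):
    """Fit loss = A * N**(-alpha) with a straight-line fit in log-log space."""
    xs = [math.log(n) for n in ns]
    ys = [math.log(loss) for loss in losses]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    slope = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / sum(
        (x - mean_x) ** 2 for x in xs
    )
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope  # (A, alpha)


# Made-up constants used only to generate synthetic data points.
A_TRUE, ALPHA_TRUE = 10.0, 0.1
ns = [1e6, 1e7, 1e8, 1e9]
losses = [A_TRUE * n ** (-ALPHA_TRUE) for n in ns]

a_fit, alpha_fit = fit_power_law(ns, losses)
predicted = a_fit * 1e10 ** (-alpha_fit)  # extrapolate to a larger N
print(a_fit, alpha_fit, predicted)
```

Because the synthetic data is noise-free, the fit recovers the generating constants; with real training runs the points scatter around the line and the extrapolation carries corresponding uncertainty.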

Stanford Online | intermediate

10

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 10: Inference

This session explains how to use a trained language model to produce outputs, a phase called inference. It covers three task types—conditional generation, open-ended generation, and classification—each with different input/output shapes that affect decoding choices. The lecture then dives into decoding methods, which are strategies to choose the next token step by step. Finally, it discusses how to evaluate generated text using human judgments and automatic metrics, along with their trade-offs.
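Two common decoding strategies, greedy selection and temperature sampling, can be sketched as follows. The summary does not say which methods the lecture covers, so treat this pair as an assumed illustration; the logits are invented and the helper names are hypothetical.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn logits into probabilities; lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy(logits):
    """Pick the index of the single most likely token."""
    return max(range(len(logits)), key=lambda i: logits[i])


def sample_with_temperature(logits, temperature, rng):
    """Draw a token index at random according to the tempered distribution."""
    probs = softmax(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


logits = [1.0, 3.0, 2.0]  # invented scores for a three-token vocabulary
rng = random.Random(0)

print(greedy(logits))
print(softmax(logits, temperature=0.5))
print(softmax(logits, temperature=2.0))
print(sample_with_temperature(logits, 1.0, rng))
```

Greedy decoding is deterministic, while a higher temperature flattens the distribution and produces more varied output.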

Stanford Online | intermediate

11

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 11: Scaling Laws 2

Scaling laws relate a model’s log loss (how surprised it is by the next token) to three knobs: number of parameters (N), dataset size (D), and compute budget (C). As you increase N, D, and C, loss usually drops smoothly. But this only holds when you keep many other things steady and consistent.
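The "surprise" interpretation of log loss can be shown with a short sketch: the loss is the average negative log-probability the model assigned to the tokens that actually occurred. The probability values below are invented for illustration.

```python
import math


def log_loss(probs_of_correct_tokens):
    """Average negative log-probability assigned to the tokens that occurred."""
    return -sum(math.log(p) for p in probs_of_correct_tokens) / len(
        probs_of_correct_tokens
    )


# Invented probabilities a model might assign to the true next tokens.
confident = [0.9, 0.8, 0.95]
unsure = [0.3, 0.2, 0.25]

print(log_loss(confident))  # lower: the model was rarely surprised
print(log_loss(unsure))     # higher: the true tokens looked unlikely
```

A model that assigns probability 1 to every true token has zero loss, and the loss grows without bound as those probabilities approach zero.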

Stanford Online | intermediate

12

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 12: Evaluation

Evaluation tells us how good a language model really is. There are two big ways to judge models: intrinsic (measure the model directly) and extrinsic (measure it through real tasks). Intrinsic is fast and clean but might not reflect real-world usefulness. Extrinsic is realistic and practical but slow and complicated to run.

Stanford Online | intermediate

13

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 13: Data 1

This class explains why data is the most important part of building language models. You learn where text data comes from (books, the web, and human feedback) and what each source is good and bad at. The instructor stresses that most of your time in real projects goes into finding, collecting, cleaning, and filtering data, not model code.

Stanford Online | intermediate

14

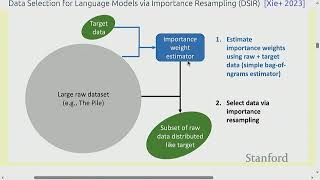

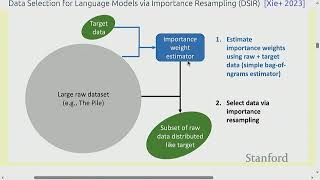

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 14: Data 2

The lecture explains why rare words are a core challenge in language modeling. Most corpora follow Zipf’s law, where a few words appear very often and a huge number appear very rarely. Rare words make probability estimates unreliable and inflate vocabulary size, which increases memory and slows training and inference.
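A quick way to see the long tail of rare words is to count word frequencies and look at how many words occur only once. The corpus below is a tiny invented sentence, far too small to show Zipf's law properly, so read it as a sketch of the bookkeeping rather than evidence.

```python
from collections import Counter

# Tiny invented corpus, used only to show the counting procedure.
corpus = (
    "the cat sat on the mat and the dog sat on the rug while the bird "
    "watched the cat"
).split()

counts = Counter(corpus)
ranked = counts.most_common()  # (word, count) pairs, most frequent first
singletons = [word for word, c in counts.items() if c == 1]

for rank, (word, count) in enumerate(ranked[:4], start=1):
    print(rank, word, count)
print(f"{len(singletons)} of {len(counts)} distinct words appear only once")
```

Even here one word dominates the counts while most of the vocabulary appears a single time, which is the pattern that makes probability estimates for rare words unreliable.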

Stanford Online | intermediate

15

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 15: Alignment - SFT/RLHF

Alignment means teaching a pre-trained language model to act the way people want: safe, helpful, and harmless. A pre-trained model is like a bag of knowledge with no idea how to use it, so it may hallucinate or say unsafe things. Alignment adds an outer layer of behavior so the model answers clearly, avoids harm, and respects user intent.

Stanford Online | intermediate

16

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 16: Alignment - RL 1

This session introduces alignment for language models and why next‑token prediction alone is not enough. When models only learn to guess the next word, they can hallucinate facts, produce toxic or biased text, and follow tricky prompts the wrong way. Alignment aims to make models helpful, honest, and harmless so they do what people actually want. The lecture lays out a practical recipe to achieve this with RLHF (Reinforcement Learning from Human Feedback).

Stanford Online | intermediate

17

Stanford CS336 Language Modeling from Scratch | Spring 2025 | Lecture 17: Alignment - RL 2

This session continues alignment with reinforcement learning for language models. It recaps reward hacking—when a model chases the reward in the wrong way, like writing very long answers if reward is tied to word count. The RLHF pipeline is reviewed: pre-train a model, gather human preference data, train a reward model, then fine-tune the policy using RL with a safety constraint. The main focus is how to optimize the policy while staying close to the original model using techniques like KL penalties, PPO, and DPO.
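Two of the techniques named above can be written down compactly. The sketch below shows a per-sample KL-penalized reward and the standard DPO loss for a single preference pair; the function names, the `beta` value, and the log-probabilities are assumptions for illustration, and PPO itself is not shown.

```python
import math


def kl_penalized_reward(reward, policy_logp, ref_logp, beta=0.1):
    """Reward minus a penalty for drifting from the reference model.

    Uses the per-sample estimate log(pi / pi_ref) of the KL term.
    """
    return reward - beta * (policy_logp - ref_logp)


def dpo_loss(policy_logp_chosen, policy_logp_rejected,
             ref_logp_chosen, ref_logp_rejected, beta=0.1):
    """DPO loss for one (chosen, rejected) response pair."""
    margin = (policy_logp_chosen - ref_logp_chosen) - (
        policy_logp_rejected - ref_logp_rejected
    )
    # -log(sigmoid(z)) is the same as log(1 + exp(-z)).
    return math.log1p(math.exp(-beta * margin))


# Invented sequence log-probabilities for illustration.
same_as_ref = dpo_loss(-5.0, -6.0, -5.0, -6.0)
prefers_chosen = dpo_loss(-3.0, -8.0, -5.0, -6.0)

print(same_as_ref)      # equals log(2) when the policy matches the reference
print(prefers_chosen)   # smaller: the policy favors the chosen response more
print(kl_penalized_reward(1.0, policy_logp=-1.0, ref_logp=-2.0))
```

In both cases `beta` controls how strongly the policy is held close to the original model: a larger value penalizes drift more heavily.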

Stanford Online | intermediate