🎬 AI Lectures (40)


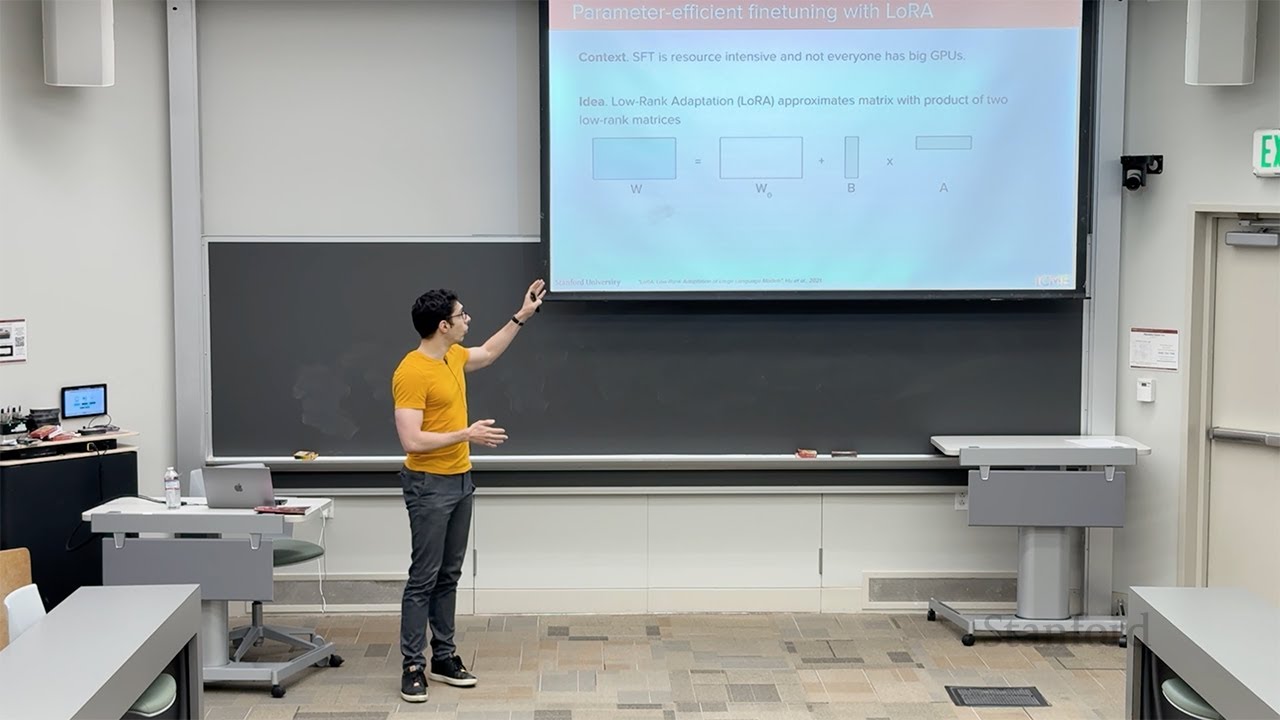

ML · Stanford CME295 Transformers & LLMs | Autumn 2025 | Lecture 5 - LLM tuning

Beginner · Stanford

Regularization prevents overfitting by adding a penalty for model complexity to the training objective, for example an L2 penalty on the weights (ridge regression) or an L1 penalty (lasso). Overfitting happens when a model memorizes the training data, noise included, and then performs poorly on new data. By discouraging overly complex patterns, regularization helps the model generalize better.
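As a rough illustration of the penalty idea, the sketch below fits a one-parameter line `y ≈ w * x` with an L2 (ridge-style) penalty and shows the slope shrinking as the penalty strength grows. The function names `ridge_slope` and `penalized_loss`, the parameter `lam`, and the data points are all made up for this example and do not come from the lecture.

```python
def ridge_slope(xs, ys, lam):
    """Slope w minimizing sum((y - w*x)^2) + lam * w^2 (no intercept term)."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    # Setting the derivative of the penalized loss to zero gives this closed form.
    return sxy / (sxx + lam)


def penalized_loss(xs, ys, w, lam):
    """Squared-error data term plus the L2 complexity penalty."""
    data_term = sum((y - w * x) ** 2 for x, y in zip(xs, ys))
    return data_term + lam * w ** 2


# Hypothetical toy data, roughly following y = x with some noise.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [1.2, 1.9, 3.2, 3.8]

for lam in (0.0, 1.0, 10.0):
    w = ridge_slope(xs, ys, lam)
    print(f"lam={lam:5.1f}  w={w:.3f}  loss={penalized_loss(xs, ys, w, lam):.3f}")
```

With `lam = 0` this reduces to ordinary least squares; larger values of `lam` pull the slope toward zero, trading a slightly worse fit on the training points for a simpler model.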

#regularization #ridge regression #lasso