
Deep Learning

Stanford CME295 Transformers & LLMs | Autumn 2025 | Lecture 4 - LLM Training

Difficulty: Beginner | Stanford

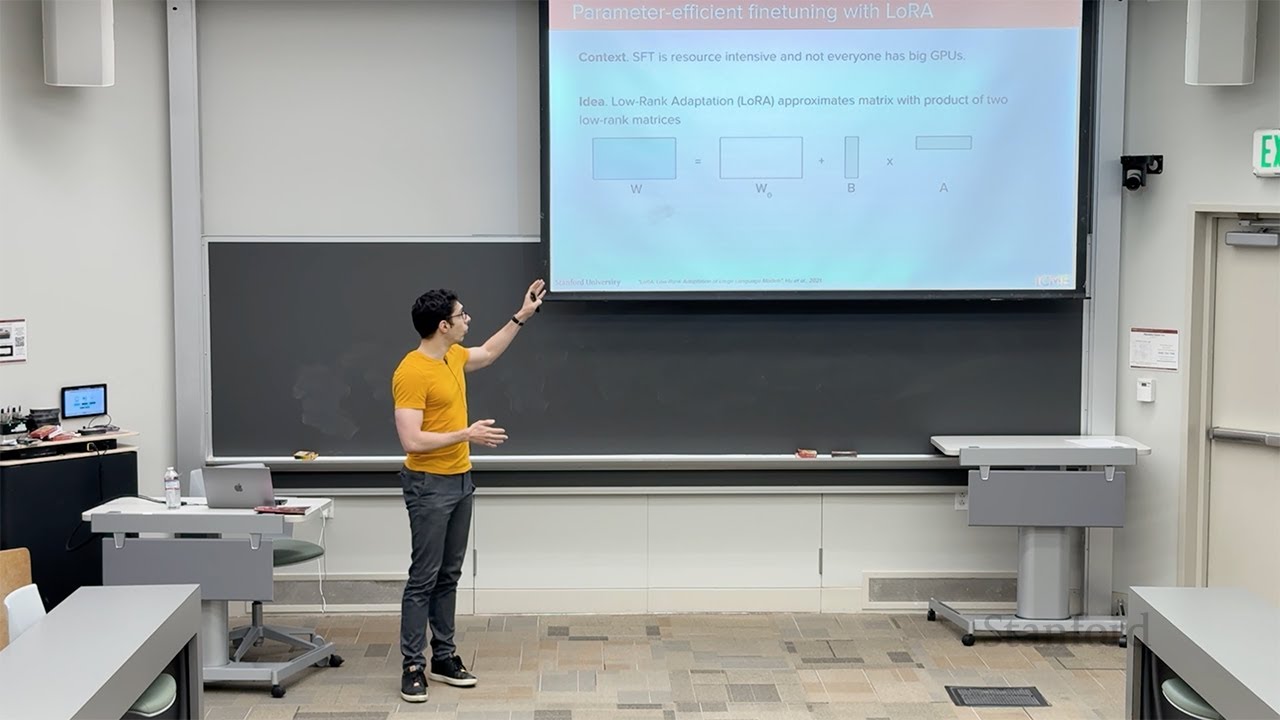

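This lecture explains how neural networks are trained by minimizing a loss function with optimization methods. It starts with gradient descent and stochastic gradient descent (SGD), showing how each update moves the parameters a small step opposite to the gradient of the loss, which is the direction in which the loss decreases fastest locally. Mini-batches speed up training because each update uses only a small random subset of the data instead of the full dataset, and the noise this sampling adds to the gradient can help the optimizer escape poor local minima in the loss landscape.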
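To make the update rule concrete, here is a minimal sketch that trains a toy one-feature linear regression with mean squared error. It compares full-batch gradient descent with mini-batch SGD. Both apply the same step, `theta <- theta - lr * grad`. They differ only in how many examples each gradient is computed from. The synthetic data, the learning rate `lr`, and the helper names `mse_grad`, `mse`, and `train` are illustrative assumptions, not code from the lecture.

```python
import random


def mse_grad(w, b, batch):
    """Gradient of (1/n) * sum((w*x + b - y)^2) with respect to w and b."""
    n = len(batch)
    gw = gb = 0.0
    for x, y in batch:
        err = w * x + b - y
        gw += 2 * err * x / n
        gb += 2 * err / n
    return gw, gb


def mse(w, b, data):
    """Mean squared error of the line w*x + b over the whole dataset."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def train(data, lr=0.05, epochs=200, batch_size=None, seed=0):
    """Full-batch gradient descent if batch_size is None, else mini-batch SGD."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    data = list(data)  # copy so shuffling does not modify the caller's list
    size = batch_size or len(data)
    for _ in range(epochs):
        rng.shuffle(data)  # new random mini-batches each epoch
        for start in range(0, len(data), size):
            batch = data[start:start + size]
            gw, gb = mse_grad(w, b, batch)
            # Step opposite to the gradient, scaled by the learning rate
            w -= lr * gw
            b -= lr * gb
    return w, b


# Hypothetical toy data: y = 3x + 1 plus small Gaussian noise
data_rng = random.Random(42)
data = [(x, 3 * x + 1 + data_rng.gauss(0, 0.1))
        for x in (data_rng.uniform(-1, 1) for _ in range(100))]

w_gd, b_gd = train(data, batch_size=None)  # 1 update per epoch
w_sgd, b_sgd = train(data, batch_size=10)  # 10 cheaper, noisier updates per epoch
print(f"GD:  w={w_gd:.2f}, b={b_gd:.2f}, loss={mse(w_gd, b_gd, data):.4f}")
print(f"SGD: w={w_sgd:.2f}, b={b_sgd:.2f}, loss={mse(w_sgd, b_sgd, data):.4f}")
```

Both runs should land near `w = 3, b = 1`. Each mini-batch step touches only 10 of the 100 examples, so SGD makes ten updates for roughly the cost of one full-batch update. The price is a noisier gradient estimate on each step.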

#gradient descent · #stochastic gradient descent · #mini-batch