🎬 AI Lectures (18)

Difficulty: Basics

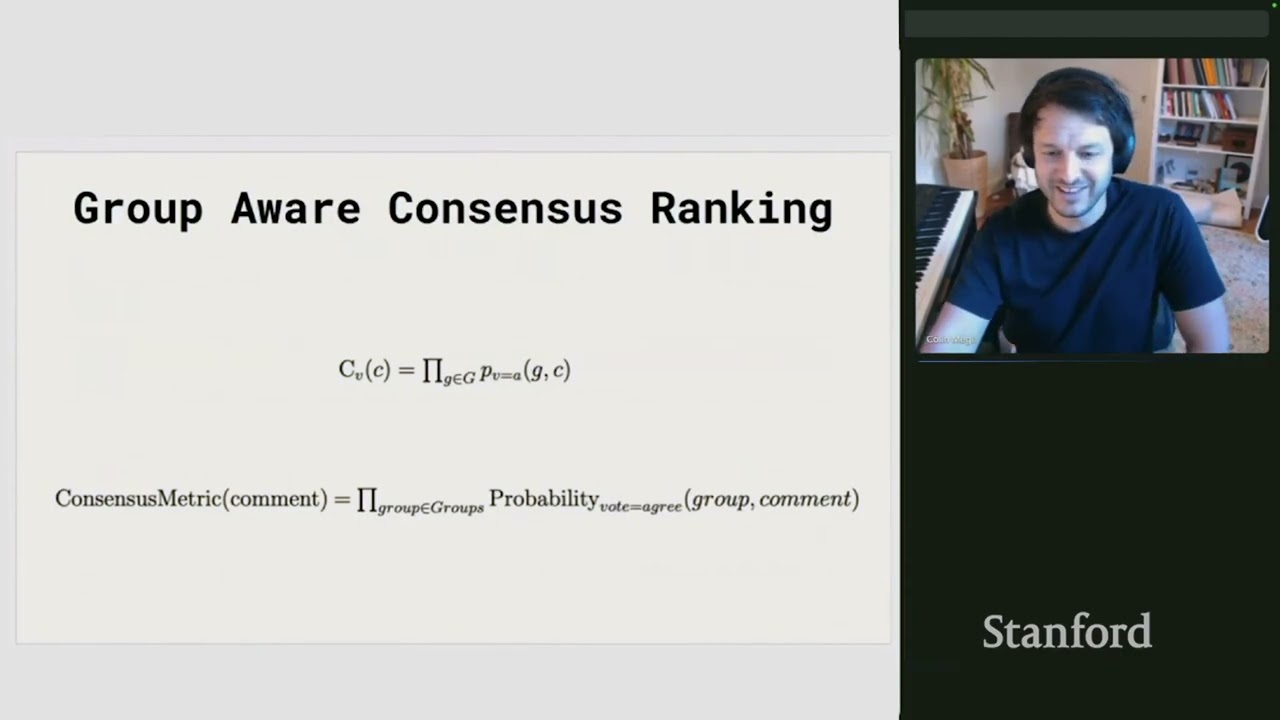

Stanford CS230 | Autumn 2025 | Lecture 8: Agents, Prompts, and RAG

Beginner | Stanford

This session sets up course logistics and introduces core machine learning ideas. You learn when and how the class meets, where to find materials, how grading works, and why MATLAB is used. The session also sets expectations: the course is challenging, homework is crucial, and live attendance is encouraged.

#machine learning, #supervised learning, #unsupervised learning