🎬AI Lectures55

Difficulty:

Basics

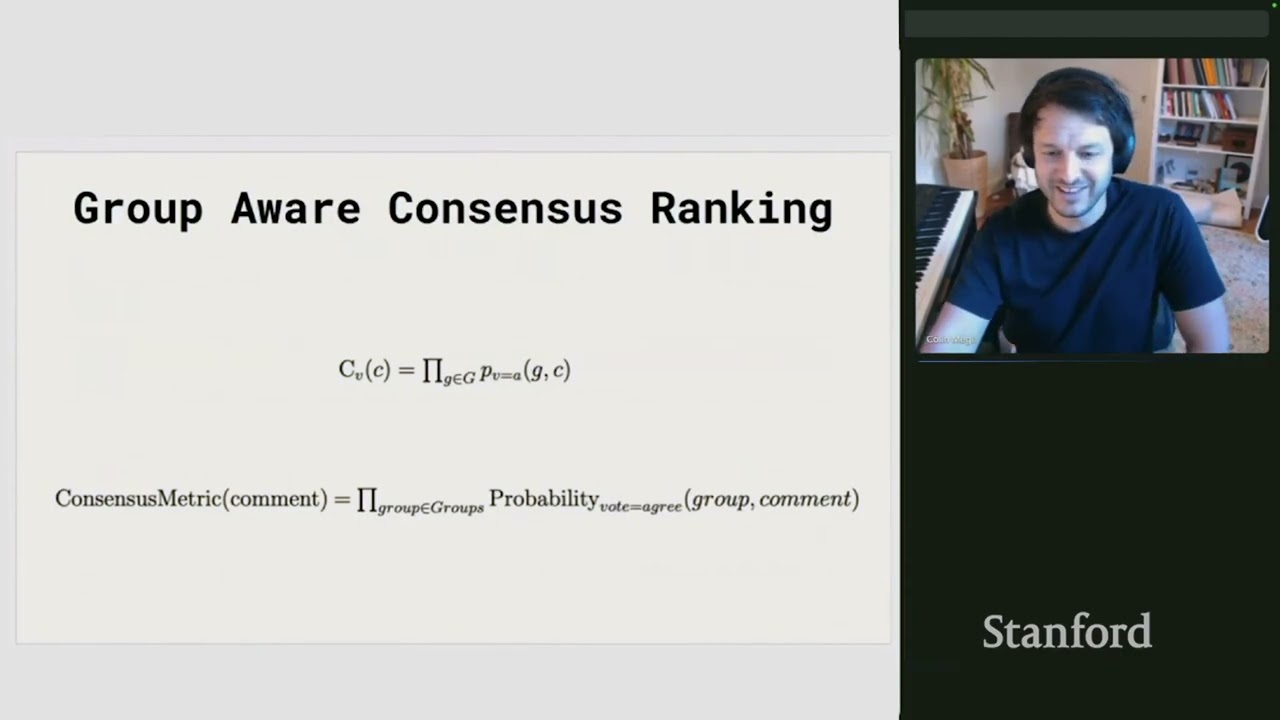

BasicsStanford CME295 Transformers & LLMs | Autumn 2025 | Lecture 3 - Tranformers & Large Language Models

BeginnerStanford

Artificial Intelligence (AI) is the science of making machines do tasks that would need intelligence if a person did them. Today’s AI mostly focuses on specific tasks like recognizing faces or recommending products, which is called narrow AI. A future goal is general AI, which would do any thinking task a human can, but it does not exist yet.

#artificial intelligence#narrow ai#general ai